The Power Bottleneck: Why Electricity Will Limit AI Growth

AI demand is growing faster than the world’s electrical infrastructure — and that will reshape where compute gets built.

Introduction

The biggest constraint on artificial intelligence is not chips.

It’s electricity.

Training frontier AI models already requires hundreds of megawatts of power, and the next generation of compute clusters could demand gigawatts.

That is equivalent to the electricity consumption of an entire city.

Yet while AI demand is scaling exponentially, electrical infrastructure expands slowly — often over decades.

The result is a growing power bottleneck that will shape where the next generation of AI infrastructure gets built.

Understanding this constraint is essential for investors trying to make sense of the AI boom.

AI Compute Is Becoming Gigawatt-Scale

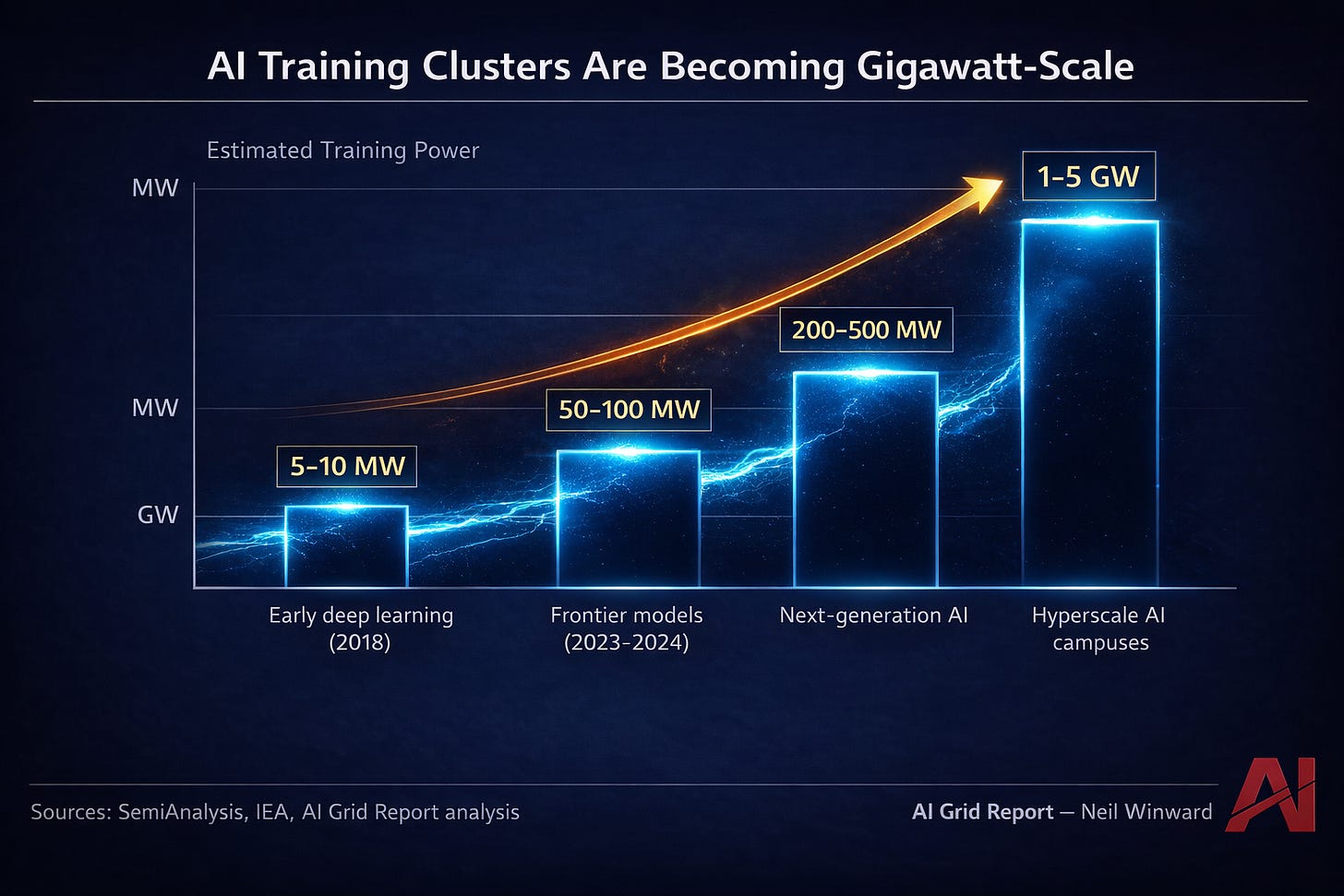

The scale of AI training infrastructure has expanded dramatically in just a few years.

Early machine-learning clusters consumed only a few megawatts of electricity. Today’s frontier AI models require vastly larger compute environments supported by massive data center campuses.

The shift is occurring at remarkable speed.

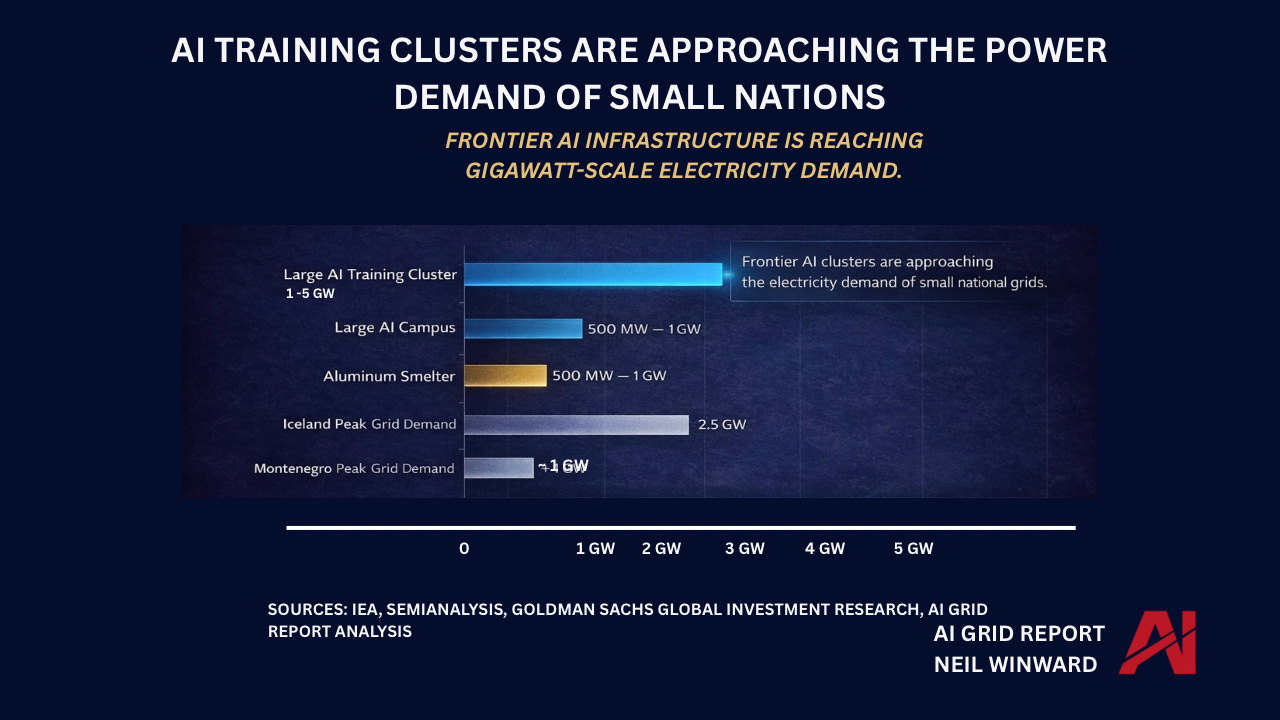

Large AI training clusters now rival heavy industrial facilities in power demand.

The largest planned AI campuses are expected to require 1 to 5 gigawatts of electricity.

To put that in perspective:

• 1 gigawatt can power roughly 750,000 homes

• 5 gigawatts approaches the electricity demand of entire metropolitan regions
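The homes comparison above is simple arithmetic, and a rough sketch makes the assumption behind it visible. The household figure below (11,680 kWh per year) is not from any dataset; it is a hypothetical value chosen because it reproduces the 750,000-homes rule of thumb. The function name `homes_powered` is likewise illustrative. Real household consumption varies widely by region and season.

```python
HOURS_PER_YEAR = 8760

# Assumed average annual household consumption in kWh.
# Hypothetical value picked to match the "750,000 homes per gigawatt" rule of thumb.
ASSUMED_ANNUAL_KWH_PER_HOME = 11_680


def homes_powered(gigawatts: float,
                  annual_kwh_per_home: float = ASSUMED_ANNUAL_KWH_PER_HOME) -> int:
    """Estimate how many average homes a continuous power supply could serve."""
    kilowatts = gigawatts * 1_000_000          # 1 GW = 1,000,000 kW
    annual_kwh = kilowatts * HOURS_PER_YEAR    # energy delivered over a full year
    return round(annual_kwh / annual_kwh_per_home)


if __name__ == "__main__":
    for gw in (1, 5):
        print(f"{gw} GW ~ {homes_powered(gw):,} homes")
```

This treats the supply as running at full output all year and ignores peak demand and transmission losses, so it is best read as an order-of-magnitude check rather than a planning figure.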

AI infrastructure is quickly becoming one of the most energy-intensive sectors of the global economy.

Electricity Infrastructure Moves Slowly

While AI demand is expanding rapidly, the physical systems that supply electricity evolve much more slowly.

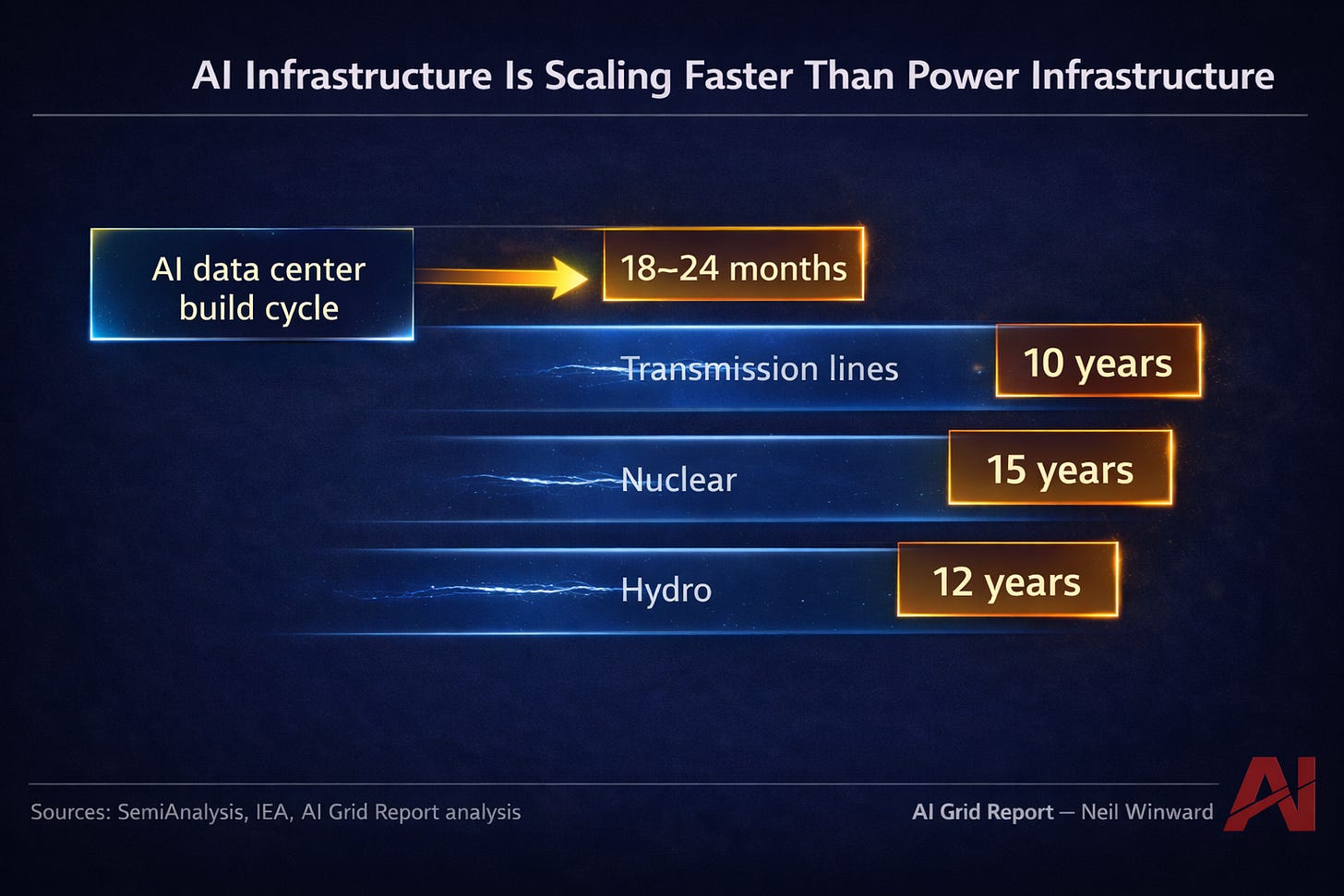

Electrical infrastructure projects require years of permitting, construction, and grid integration.

Power plants and transmission networks cannot be scaled overnight.

New AI data centers can be deployed in just a few years, but the generation and transmission capacity required to support them often takes a decade or more to expand.

This mismatch between AI growth timelines and energy infrastructure timelines is becoming one of the defining constraints of the AI economy.

As a result, AI infrastructure must concentrate in regions where sufficient electricity already exists.

The Geography of AI Compute

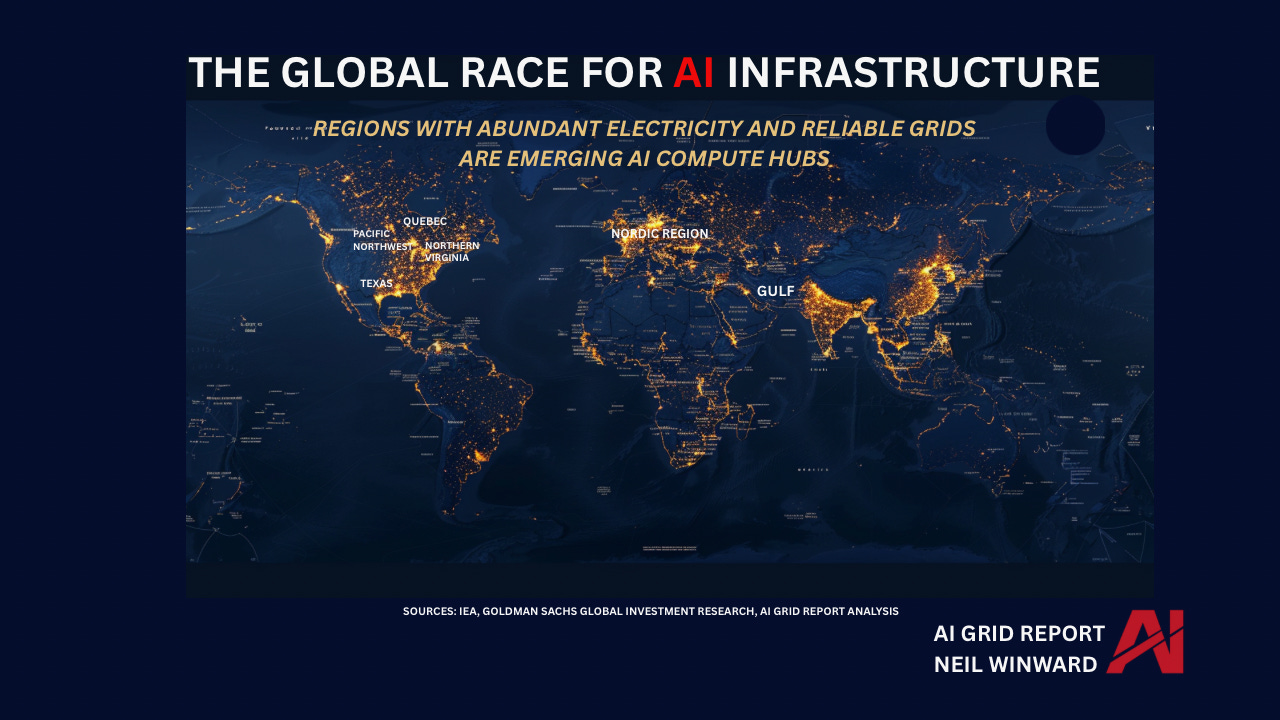

Because electricity infrastructure cannot expand quickly everywhere, AI infrastructure is clustering in locations that already possess abundant energy resources.

These regions share several important characteristics:

• reliable electrical grids

• large existing power generation capacity

• competitive industrial electricity prices

• favorable infrastructure permitting

Regions that meet these criteria are emerging as global AI compute hubs because they already have the large-scale electrical infrastructure that massive AI data centers need.

In many cases they also benefit from:

• hydroelectric power

• natural gas availability

• cooler climates

• existing data center ecosystems

As a result, they are increasingly attracting the next wave of AI investment.

AI Data Centers Are Becoming Industrial-Scale Energy Consumers

The electricity requirements of AI infrastructure are now approaching those of major industrial sectors.

Large AI campuses can require power comparable to:

• aluminum smelters

• steel manufacturing plants

• large petrochemical complexes

In other words, AI is becoming an industrial energy consumer.

This shift represents a profound transformation in how digital infrastructure interacts with energy systems.

For decades, the internet economy consumed relatively modest amounts of electricity compared to traditional industry.

Artificial intelligence is changing that equation.

The Strategic Implication

The global race for AI will not be decided only by:

• semiconductor manufacturing

• software innovation

• venture capital

It will increasingly be decided by something far more fundamental.

Electricity.

Countries and regions that possess abundant power generation capacity, reliable grids, and scalable energy infrastructure will enjoy a structural advantage in hosting the next generation of AI compute facilities.

The power bottleneck is no longer theoretical.

It is already shaping the geography of the AI economy.

Conclusion

Artificial intelligence is often described as a software revolution.

But beneath the algorithms lies a much more physical reality.

AI runs on electricity.

As demand for compute accelerates, the world’s electrical infrastructure will become one of the most important constraints on technological growth.

For investors, policymakers, and technology companies alike, the lesson is becoming clear:

The future of artificial intelligence will be built not only in code — but in power plants, transmission lines, and electrical grids.
